Inside the $37 Billion Scam Economy: Industrial-Scale Fraud and the Global Race to Respond

- TrustSphere Network

- May 17, 2025


What if one of the world’s fastest-growing criminal economies wasn’t hidden in the shadows of the dark web—but operating openly across Southeast Asia, powered by AI, driven by crypto, and run like a multinational corporation?

That’s the sobering picture emerging from recent investigations by the UN Office on Drugs and Crime (UNODC), Europol, the FBI, and financial intelligence units across Asia. Scam operations have evolved beyond petty online cons. Today, they are highly organized, cross-border criminal enterprises generating over $37 billion annually—a figure rivalling the GDP of some smaller nations.

These operations aren’t just growing in size. They are becoming more sophisticated, scalable, and industrialized—with Southeast Asia now widely regarded as the epicenter of this new criminal economy.

Phase 1: Scam Compounds as Industrialized Crime Networks

The UNODC’s Inflection Point report (April 2025) reveals the dark mechanics behind this economy: vast scam compounds in Myanmar, Cambodia, and Laos, where tens of thousands of trafficked workers are forced to run online scams. These aren’t fringe operations; they’re sprawling cybercrime factories, often housed in glitzy office towers or guarded special economic zones (SEZs), complete with KPIs, coaching scripts, and real-time dashboards.

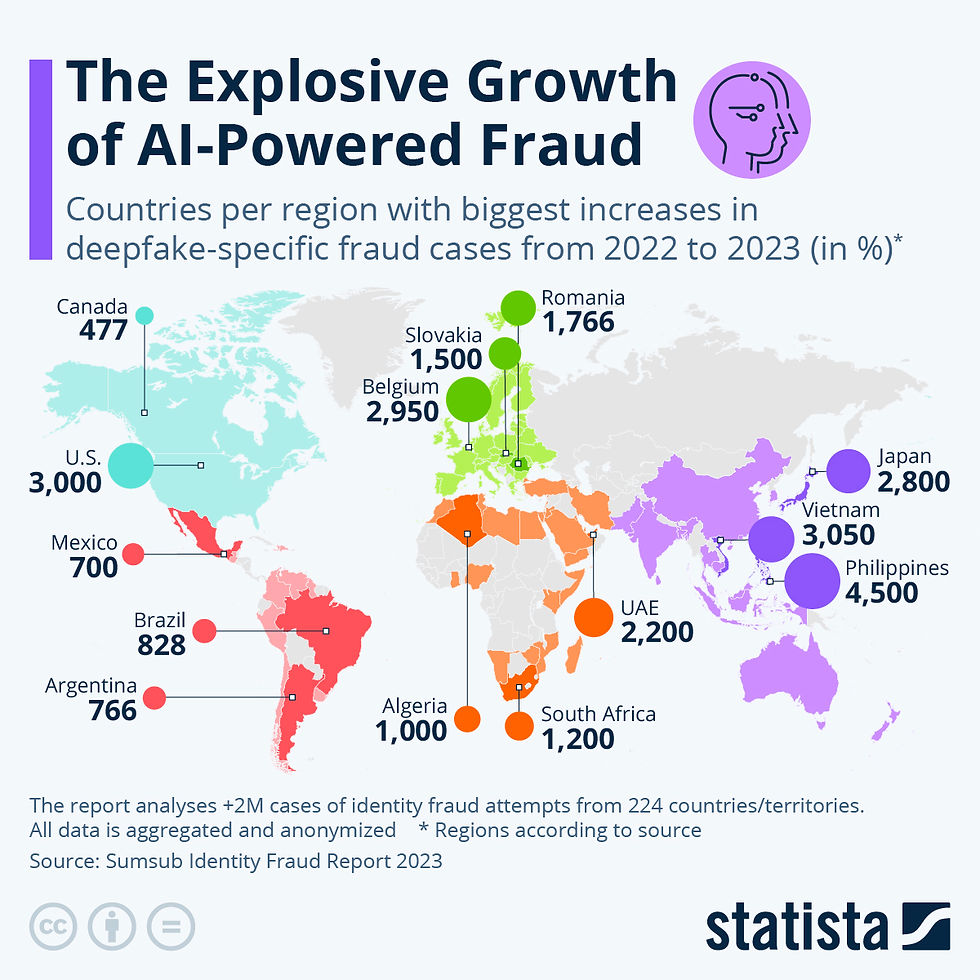

Victims are lured globally—across the U.S., Europe, India, Singapore, Australia, and beyond—into a range of scams: from fake crypto investments and AI-generated romance scams, to deepfake job recruiters and “get-rich-quick” schemes.

📍 Case Study – Cambodia

In 2024, a joint UN-Cambodian task force uncovered a scam operation on the outskirts of Phnom Penh that mimicked a full-fledged business process outsourcing (BPO) centre. Inside were more than 1,200 workers, many trafficked from mainland China, Vietnam, and the Philippines, forced to impersonate investment advisors targeting victims across North America and Europe.

These industrialized operations use corporate playbooks: onboarding scripts, lead scoring, team performance incentives, and even ChatGPT-like language models trained on real conversations to increase conversion rates.

Phase 2: Deepfakes and AI Are Breaching Financial Defenses

As generative AI tools become more accessible, criminals are using them to bypass financial controls at scale.

According to Europol’s Biometric Vulnerabilities report (April 2025), AI is now routinely used to defeat biometric security and KYC onboarding systems. Deepfake “presentation attacks” can fool liveness checks, while voice clones are used to impersonate customers during phone-based ID verification.

Voice cloning: Impersonating a bank customer to reset passwords or confirm a transaction

Synthetic “live” video: Fooling KYC platforms with AI-generated video that appears to blink, nod, and smile in real time

Synthetic identities: Created using breached PII and generative models to simulate legitimate user behavior

📍 Example – India

An Indian fintech reported a sudden spike in new customer sign-ups that passed video KYC but later triggered fraud alerts. An investigation found that fraudsters were using AI-generated faces with dynamic expressions to pass automated onboarding. These fake accounts were then used to launder stolen crypto.

Phase 3: Crypto-Fueled Scams Become the Norm

The FBI’s 2025 Internet Crime Report shows a 22% year-over-year jump in online fraud losses, with crypto investment scams making up nearly $4.5 billion in the U.S. alone. Asia-Pacific tells a similar story.

The “pig butchering” scam, a long-term psychological manipulation con, is now rampant across Thailand, Taiwan, Vietnam, Malaysia, and Hong Kong. Victims are groomed through WhatsApp, Facebook, or dating apps, slowly convinced to invest in what appears to be a legitimate trading platform.

What sets these scams apart is the digital intimacy they exploit—building trust over weeks or months before striking.

📍 Example – Singapore

A 2024 case involved a tech professional who was lured into a pig butchering scam over Telegram. The fake platform showed real-time charts, account balances, and a responsive support team. He lost over SGD 220,000 before realizing he was being duped. The scam was traced back to a compound in Laos, with funds routed through crypto mixers and eventually cashed out via mule accounts in Dubai.

Phase 4: Financial Crime Fighters Scramble to Adapt

With criminals moving faster than traditional fraud controls, institutions are rethinking their approach. The fraud battlefield has shifted from reactive monitoring to proactive, intelligence-led prevention.

🔍 What must change to catch up?

Perpetual KYC (pKYC): Static, once-off onboarding is obsolete. Banks must implement continuous identity verification, incorporating behavioral biometrics, device intelligence, and session analytics.

Behavioral Analytics > Identity Checks: Criminals can fake a face, but not a pattern. Tracking typing speed, mouse movements, app usage rhythms, and device familiarity can detect bots and synthetic IDs before money moves.

Synthetic ID Risk Modelling: Fraud risk engines need to assign higher risk weights to patterned anomalies such as identical device fingerprints, repeated geolocation spoofing, or ultra-fast application-to-transaction timelines.

Real-Time Cross-Border Intelligence Sharing: APAC lacks a coordinated alert system akin to the EU’s FIU.net. A regional alliance focused on real-time intelligence, alerts, and blacklist sharing between regulators, telcos, banks, and crypto exchanges is now essential.

AI vs. AI: AI-generated fraud requires AI-enabled defenses. Institutions are now deploying adversarial AI that stress-tests onboarding systems, real-time anomaly detection, and self-healing fraud engines that learn from each attempt.
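
To make the synthetic ID risk modelling idea concrete, here is a minimal Python sketch of a rule-based scorer that adds weight for each of the three anomalies named above: shared device fingerprints, repeated geolocation mismatches, and very fast application-to-transaction timelines. The `Application` fields, the weights, and the thresholds are all hypothetical values chosen for illustration; a production risk engine would calibrate them against labelled fraud data and would likely combine them with a trained model.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical weights and thresholds, for illustration only.
WEIGHT_SHARED_DEVICE = 0.4
WEIGHT_GEO_SPOOFING = 0.3
WEIGHT_FAST_FIRST_TXN = 0.3
MAX_ACCOUNTS_PER_DEVICE = 2     # more accounts than this on one device is suspicious
MAX_GEO_MISMATCHES = 2          # more mismatches than this suggests location spoofing
MIN_SECONDS_TO_FIRST_TXN = 600  # a first transaction sooner than this is suspicious


@dataclass
class Application:
    account_id: str
    device_fingerprint: str
    geo_mismatches: int          # sessions where reported and observed location disagreed
    seconds_to_first_txn: float  # time from application to first transaction


def score_applications(apps):
    """Return {account_id: risk score in [0, 1]} using additive rule weights."""
    # Count how many applications share each device fingerprint.
    device_counts = Counter(a.device_fingerprint for a in apps)
    scores = {}
    for a in apps:
        score = 0.0
        if device_counts[a.device_fingerprint] > MAX_ACCOUNTS_PER_DEVICE:
            score += WEIGHT_SHARED_DEVICE
        if a.geo_mismatches > MAX_GEO_MISMATCHES:
            score += WEIGHT_GEO_SPOOFING
        if a.seconds_to_first_txn < MIN_SECONDS_TO_FIRST_TXN:
            score += WEIGHT_FAST_FIRST_TXN
        # Round to avoid floating-point noise in the summed weights.
        scores[a.account_id] = round(score, 2)
    return scores
```

Calling `score_applications` on a batch of recent sign-ups returns one score per account, which a review queue could sort in descending order. The additive design keeps each rule auditable, at the cost of ignoring interactions between signals.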

Conclusion: A Global Crime, an Asian Epicenter

The scam economy has reached industrial scale, with Southeast Asia at its operational core and every corner of the globe in its crosshairs. Fraud has become more than a criminal act—it’s an organized, scalable, and shockingly effective business model.

But the response is gaining momentum. With enhanced detection frameworks, regional cooperation, and AI-powered fraud prevention, there is a growing arsenal of tools to disrupt the economics of online crime.

Final Thought:

What type of fraud do you think your organization is still underestimating? And how will you respond when a synthetic voice calls your call center, sounding exactly like your CEO?
